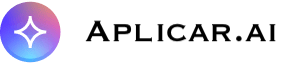

For nearly seven years, the AI infrastructure map had a fixed shape. OpenAI built the frontier models, Microsoft Azure was the only hyperscaler contractually allowed to host them, and AWS and Google Cloud competed around the edges. On April 27, 2026, that map was redrawn.

OpenAI and Microsoft renegotiated their partnership to end Azure’s exclusivity. Less than 24 hours later, AWS launched OpenAI’s frontier models — including GPT-5.5 and the Codex coding agent — on Amazon Bedrock in limited preview, with general availability rolling out within weeks.

This is not a routine partnership update. It is the moment multi-cloud AI stops being the exception and starts becoming the default architecture.

1. What actually changed on April 27, 2026

Before

- OpenAI’s API products were exclusive to Microsoft Azure.

- Microsoft held a “first refusal” position on all of OpenAI’s IP through the AGI clause.

- If an enterprise wanted GPT, it effectively had to buy Azure.

After

- OpenAI is free to serve its models on any cloud — starting with AWS, with Google Cloud certification reportedly targeted for Q4 2026.

- Microsoft remains OpenAI’s “primary cloud partner” and keeps a non-exclusive license to OpenAI IP through 2032.

- Microsoft still gets a 20% revenue share through 2030, now subject to an undisclosed cap.

- OpenAI must still ship new frontier models to Azure first, before they appear on competing clouds.

- The controversial AGI clause that would have changed the business relationship once AGI was reached has been scrapped.

In short: Microsoft is no longer the gatekeeper, but it is far from sidelined. Both companies walked away with wins.

2. Why AWS moved in the same week — and why it was ready

AWS did not improvise this. The groundwork was laid across two enormous deals:

- November 2025: A $38 billion, seven-year compute commitment giving OpenAI access to hundreds of thousands of NVIDIA GB200 and GB300 GPUs in Amazon EC2 UltraServers.

- February 2026: A separate $50 billion Amazon investment in OpenAI, paired with a cloud commitment worth more than $100 billion over eight years. Critically, this deal also commits OpenAI to running workloads on AWS’s custom Trainium chips and to co-developing a “Stateful Runtime Environment” on Bedrock.

So when Microsoft’s exclusivity dropped, AWS already had the infrastructure, the contracts, and the integration layer ready. AWS CEO Matt Garman summarized it bluntly at the launch event: enterprise customers’ production applications, data, and security posture already lived in AWS — they had simply been forced to leave that environment to use OpenAI’s best models.

3. What’s actually shipping on Bedrock

Three things launched together:

- OpenAI’s frontier models (including GPT-5.5 and GPT-5.4), callable through the same Bedrock APIs enterprises already use — InvokeModel, Converse, and batch inference — and reusing existing IAM policies, guardrails, and knowledge bases.

- OpenAI Codex, the coding agent, integrated directly into AWS environments.

- Amazon Bedrock Managed Agents powered by OpenAI, an enterprise agent platform that retains memory across interactions. This is the productized form of the “Stateful Runtime Environment” the two companies announced in February.

The architectural significance: OpenAI inference becomes part of AWS infrastructure rather than an external API call. That means lower latency, no cross-cloud egress fees, native AWS security (IAM, PrivateLink, encryption, CloudTrail logging), and one less vendor in the compliance matrix.
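As a rough illustration of what "callable through the same Bedrock APIs" could look like in practice, here is a minimal Python sketch built around the request shape of Bedrock's Converse operation. The model identifier below is a placeholder assumption, not a confirmed ID, and the helper names `build_converse_request` and `ask` are hypothetical. The only SDK call assumed is the `converse` method on boto3's `bedrock-runtime` client.

```python
from typing import Any

# Placeholder model ID: an assumption for illustration, not a confirmed identifier.
ASSUMED_MODEL_ID = "openai.gpt-5-5-v1:0"


def build_converse_request(prompt: str, model_id: str = ASSUMED_MODEL_ID,
                           max_tokens: int = 512) -> dict[str, Any]:
    """Build keyword arguments in the shape the Converse operation expects."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }


def ask(client: Any, prompt: str, model_id: str = ASSUMED_MODEL_ID) -> str:
    """Send one user turn and return the first text block of the reply."""
    response = client.converse(**build_converse_request(prompt, model_id))
    return response["output"]["message"]["content"][0]["text"]


# Usage against a real account (needs AWS credentials and model access):
#   import boto3
#   runtime = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(ask(runtime, "Summarize this incident report in three bullets."))
```

Because the request is just a dictionary keyed by `modelId`, pointing the same call at a different model family hosted on Bedrock should only require changing that one string. IAM policies and logging apply to the client, not to the individual model.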

4. The Trainium story — promise and reality

The longer-term story is silicon. AWS is not content to be a landlord for Nvidia GPUs. With Trainium3, built on a 3 nm process and launched at re:Invent 2025, AWS is making its most credible push yet to break Nvidia’s pricing power.

The honest comparison

| Metric | Trainium3 | NVIDIA Blackwell Ultra (GB300) |
|---|---|---|
| FP8 per chip | ~2.52 PFLOPS | ~5 PFLOPS |
| HBM per chip | 144 GB HBM3e | 288 GB HBM3e |
| System total (max) | 362 PFLOPS (Trn3 UltraServer, 144 chips) | ~540 PFLOPS (GB300 NVL72) |
| Process node | TSMC 3 nm | TSMC 4NP |
| Best at | FP8 training, system-level TCO | FP4 inference, raw per-chip compute |

Per chip, Nvidia still wins — by roughly 2x on raw FP8 throughput. AWS is not pretending otherwise. The pitch is different: Trainium3 reportedly delivers about 30% better TCO per marketed FP8 performance than GB300 NVL72 (per SemiAnalysis), with 4x better energy efficiency than the previous generation. At FP4 inference, however, Nvidia’s lead is much wider.
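The headline ratios in the comparison table can be checked with a few lines of arithmetic. The sketch below uses only the approximate figures quoted in the table; the variable names are illustrative, and the system-level ratio simply divides the two quoted totals rather than deriving them.

```python
# Approximate figures copied from the comparison table.
TRN3_FP8_PFLOPS = 2.52             # per Trainium3 chip
GB300_FP8_PFLOPS = 5.0             # per GB300 chip
TRN3_CHIPS_PER_ULTRASERVER = 144
TRN3_HBM_GB = 144                  # per Trainium3 chip
TRN3_SYSTEM_PFLOPS_QUOTED = 362    # Trn3 UltraServer, as quoted
GB300_SYSTEM_PFLOPS_QUOTED = 540   # GB300 NVL72, as quoted

# Per-chip gap: how many times more FP8 throughput one GB300 offers.
per_chip_ratio = GB300_FP8_PFLOPS / TRN3_FP8_PFLOPS

# System totals implied by multiplying the per-chip numbers out.
trn3_system_pflops = TRN3_FP8_PFLOPS * TRN3_CHIPS_PER_ULTRASERVER
trn3_system_hbm_tb = TRN3_HBM_GB * TRN3_CHIPS_PER_ULTRASERVER / 1000

# System-level gap using the two quoted totals.
system_ratio = GB300_SYSTEM_PFLOPS_QUOTED / TRN3_SYSTEM_PFLOPS_QUOTED

print(f"Per-chip FP8 gap:        {per_chip_ratio:.2f}x")
print(f"Trn3 UltraServer FP8:    {trn3_system_pflops:.1f} PFLOPS")
print(f"Trn3 UltraServer HBM:    {trn3_system_hbm_tb:.1f} TB")
print(f"Quoted system-level gap: {system_ratio:.2f}x")
```

The per-chip gap comes out just under 2x, and 144 chips at 2.52 PFLOPS each reproduce the quoted 362 PFLOPS system figure after rounding. The gap between the quoted system totals is smaller than the per-chip gap, which is consistent with the claim that AWS competes at the system level rather than chip for chip.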

What this means strategically

AWS is running the same playbook Apple ran with Apple Silicon: design the chip, own the cloud, host the models, sell the platform. Trainium will not displace Nvidia for every workload — and OpenAI’s $38B AWS deal is still primarily Nvidia GPUs. But for high-volume inference and for training runs where energy and total cost matter more than peak per-chip compute, Trainium gives AWS margin headroom that Azure and Google Cloud have to match either with TPUs (Google) or by paying Nvidia retail (Microsoft).

Project Rainier — a 500,000-Trainium2-chip cluster training Anthropic’s Claude models — already proved Trainium can run frontier-scale workloads in production. With OpenAI now contractually committed to Trainium under the February deal, AWS has its second anchor tenant.

5. What this really means for Microsoft

The narrative that Microsoft “lost” is too simple. Microsoft traded exclusivity for cash certainty and product autonomy.

What Microsoft gave up

- Sole hosting rights for OpenAI’s commercial products.

- The unique enterprise lock-in argument: “you have to buy Azure to get GPT.”

- The AGI-trigger clause that would have altered the business relationship.

What Microsoft kept (or gained)

- Non-exclusive IP license through 2032 — six more years of guaranteed access.

- 20% revenue share through 2030 (capped, but still likely worth billions).

- “First-shipping” rights: new OpenAI frontier models still debut on Azure before any other cloud.

- No further revenue-share payments back to OpenAI for Azure-served models.

- Copilot, Bing, and Microsoft 365 integration — the consumer and productivity surface area that makes OpenAI most useful to most enterprises.

Microsoft moves from “the only AI cloud” to “the most integrated AI cloud.” That is a downgrade in narrative but not necessarily in revenue.

6. Google Cloud, Anthropic, and the rest of the field

Google Cloud is reportedly studying the new contract terms to see what is possible. Whether or not OpenAI lands on GCP, Google’s strategy stays the same: differentiate on Gemini and TPUs rather than depend on someone else’s models.

Anthropic is the quiet winner. Amazon doubled down on its original AI partner just weeks before the OpenAI deal, with up to $25 billion in additional investment and a $100 billion-plus cloud commitment of its own. Bedrock now hosts both Claude and GPT side by side — an unusual position that lets AWS sell “model neutrality” as a feature.

Alibaba Cloud and the Chinese hyperscalers are largely insulated from this Western reshuffle. Their game is sovereignty and the domestic model stack.

7. The real shift: from model access wars to infrastructure efficiency wars

For three years, enterprise AI procurement was dominated by one question: which cloud has the model I need?

That question is now obsolete. The new question is: which cloud runs the model I want with the best price, latency, governance, and tooling?

Competition shifts to dimensions that are much harder to fake:

- Custom silicon — Trainium vs. Nvidia vs. TPUs vs. whatever Microsoft’s Maia chips become.

- Orchestration layers — Bedrock vs. Azure AI Foundry vs. Vertex AI.

- Cost per token at scale, especially for inference.

- Multi-model agent platforms — the new Bedrock Managed Agents is the opening shot here.

- Enterprise governance — observability, evals, fine-tuning pipelines, hybrid deployments.
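To make the "cost per token at scale" dimension concrete, here is a minimal sketch of how a buyer might compare two offers for the same model. Everything in it is a placeholder assumption: the cloud labels, the per-million-token prices, and the traffic volumes are invented for illustration and are not quoted rates from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """Hypothetical price card for one model on one cloud (USD per 1M tokens)."""
    name: str
    input_price: float
    output_price: float


def monthly_cost(offer: Offer, input_tokens: int, output_tokens: int) -> float:
    """Token spend for one month, ignoring egress, discounts, and commitments."""
    return (input_tokens / 1_000_000 * offer.input_price
            + output_tokens / 1_000_000 * offer.output_price)


# Placeholder numbers only; replace with real quotes before drawing conclusions.
offers = [
    Offer("cloud-a", input_price=2.00, output_price=8.00),
    Offer("cloud-b", input_price=1.80, output_price=9.00),
]
workload = {"input_tokens": 4_000_000_000, "output_tokens": 500_000_000}

# Print the offers from cheapest to most expensive for this workload.
for offer in sorted(offers, key=lambda o: monthly_cost(o, **workload)):
    print(f"{offer.name}: ${monthly_cost(offer, **workload):,.0f}/month")
```

With these made-up numbers the cloud with the cheaper input price wins because the workload is input-heavy. Shifting the mix toward output tokens would reverse the ranking, which is why a single headline price per token says little about cost at scale.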

8. Why AWS is structurally well-positioned — but not invincible

AWS enters this new phase with a rare combination:

- The largest cloud footprint globally, where most enterprise data already lives.

- A genuinely multi-model platform (OpenAI, Anthropic, Meta, Mistral, Cohere, Amazon’s own Nova) inside one orchestration layer.

- Custom silicon that is competitive on TCO even if not on per-chip peak performance.

- Deep enterprise tooling — IAM, VPC, compliance — that is hard to dislodge.

The risks are real, though. Per-chip performance still favors Nvidia, and CUDA’s software moat remains the deepest in the industry. Microsoft retains the consumer and productivity surface where AI gets used most. And OpenAI itself now has every incentive to play hyperscalers against each other on price.

Final takeaway

The April 2026 OpenAI–AWS launch is a turning point, but not the one most headlines suggest. Microsoft did not lose; it traded a monopoly position for a more sustainable one. AWS did not “win” OpenAI; it secured a seat at the table through a $38 billion compute contract and a separate $50 billion equity investment. And Trainium did not displace Nvidia; it earned the right to keep competing.

What actually ended is the era when access to a single model could define cloud strategy. From here on, the AI cloud market will be decided by infrastructure efficiency, multi-model orchestration, and silicon economics. That is a much harder game than exclusivity — and a much more interesting one to watch.

Sources: AWS, Reuters, CNBC, GeekWire, TechCrunch, Axios, The New Stack, SemiAnalysis, Tom’s Hardware (April–May 2026 reporting and re:Invent 2025 disclosures).